All Eyes On HBM

Mar 17, 2025

Samsung recently announced that it will launch its first mobile product equipped with LPW DRAM in 2028. LPW DRAM, also known as low-latency wide I/O (LLW) or "mobile HBM", stacks LPDDR DRAM using a new vertical wire bonding packaging technology. By greatly increasing the number of I/O interfaces, it reduces power consumption and improves performance, and it has attracted much attention in the industry.

As is well known, the rise of AI in recent years has pushed memory performance requirements in the data center and server market to unprecedented levels.

Major memory manufacturers such as SK hynix, Samsung Electronics, and Micron have incorporated HBM into their core product lines, seeing it as the key to promoting technological innovation and market competition.

Now, the memory giants are planning to further expand the use of HBM chips, aiming to bring them from the data center to the automotive and mobile device markets.

HBM enters the field of smart cars

In the era of large models, equipping AI chips with HBM has become an industry consensus, and the automotive industry has gradually begun to adopt HBM as well.

As the "New Four Modernizations" trend in the automotive industry evolves, smart cars demand ever more real-time data processing, high-resolution image processing, and data storage. In particular, new systems such as advanced driver assistance systems, intelligent cockpit systems, and infotainment systems place high demands on the memory performance of on-board chips, so HBM is expected to be widely used in in-vehicle computing platforms.

In addition, the adoption of in-vehicle end-to-end models is likely to become more and more common in the future, which provides a large number of opportunities for HBM's on-board applications.

The application of HBM in the automotive field is still in its infancy, but some important breakthroughs have been made. SK hynix's HBM2E is used in Google's Waymo autonomous cars, marking HBM's official entry into the automotive market and highlighting the growing importance of high-performance memory in vehicles.

SK hynix, as the exclusive supplier of advanced memory technology for Waymo's autonomous vehicles, has produced HBM2E separately and specifically for automotive applications to meet the stricter quality requirements for automotive chips. As the first manufacturer to offer HBM chips that meet the stringent AEC-Q automotive standards, SK hynix quotes the following figures for its automotive-grade HBM2E: capacity of up to 8GB, a transfer speed of up to 3.2Gbps per pin, and bandwidth of 410GB/s, setting a new benchmark for the industry.
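The quoted bandwidth can be reproduced from the transfer speed if the 3.2Gbps figure is treated as a per-pin rate. The following is a minimal Python sketch of that arithmetic. It assumes a 1024-bit data interface per HBM stack, which is the standard HBM width but is not stated in this article, and the function name is purely illustrative.

```python
def peak_bandwidth_gb_per_s(pin_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak bandwidth in GB/s: per-pin rate (Gb/s) times data pins, divided by 8 bits per byte."""
    return pin_rate_gbps * bus_width_bits / 8


# Assumption (not from the article): one HBM stack exposes a 1024-bit data interface.
HBM_STACK_WIDTH_BITS = 1024

hbm2e = peak_bandwidth_gb_per_s(3.2, HBM_STACK_WIDTH_BITS)
print(f"HBM2E stack: {hbm2e:.1f} GB/s")  # 409.6 GB/s, which rounds to the quoted 410GB/s
```

The same formula explains why HBM reaches high bandwidth at a modest per-pin speed: most of the throughput comes from the very wide interface rather than from fast signaling.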

Building on this, SK hynix is expanding its cooperation with NVIDIA, Tesla, and other giants in autonomous driving solutions, and is looking for partners to install HBM in autonomous vehicles, aiming to extend its business from the existing AI data center market to the booming autonomous vehicle market.

Although Samsung has not directly disclosed the progress of automotive HBM, it is likely to be indirectly participating in the autonomous driving ecosystem through its cooperation with NVIDIA.

On the whole, with the increasingly fierce competition in the smart car market, car companies need to enhance their competitiveness by improving the intelligence level of vehicles, and HBM technology is undoubtedly the key to achieving this goal. At present, a number of car companies are also actively seeking cooperation opportunities with HBM manufacturers.

Some experts said that in the long run, HBM will become the mainstream, and if HBM is adopted by leading companies such as Tesla, this trend will accelerate.

According to data from market research institutions, the global automotive memory chip market was worth $4.76 billion in 2023 and is expected to reach $10.25 billion by 2028.

HBM, going mobile

In addition to the automotive market, with the rapid development of AI, 5G and other technologies, mobile devices are becoming more and more powerful, and the requirements for memory performance are also increasing. From running complex AI applications to enabling smooth multitasking to supporting high-definition video and large-scale games, mobile devices need memory that can provide higher bandwidth and lower latency.

Take smartphones as an example. With the popularity of AI photography, AI voice assistants, and similar functions, phones need to process large amounts of data in a short time. When taking an AI-optimized photo, the phone must analyze and process the image in real time, which requires memory that can read and store image data quickly. Although traditional LPDDR memory can meet the needs of everyday applications, it is increasingly unable to meet these high-performance requirements.

In areas such as laptops and wearables, there is also an urgent need for high-performance memory. When running large-scale office software and video editing, laptops need memory with efficient data processing capabilities. Wearable devices, such as smart watches, also need memory to quickly process sensor data when implementing functions such as health monitoring and exercise tracking.

The advent of HBM opens up new possibilities for meeting these needs. HBM for mobile devices, also known as "mobile HBM", has characteristics similar to those of the HBM used in current servers.

HBM uses advanced 3D stacking technology to connect multiple DRAM chips vertically with through-silicon vias (TSVs), which greatly increases memory bandwidth. This design enables HBM's data transfer rate to reach hundreds of GB/s, several times that of traditional DDR memory. It can therefore meet AI computing's need to process massive amounts of data quickly, reduce data transmission latency, and greatly improve training efficiency.

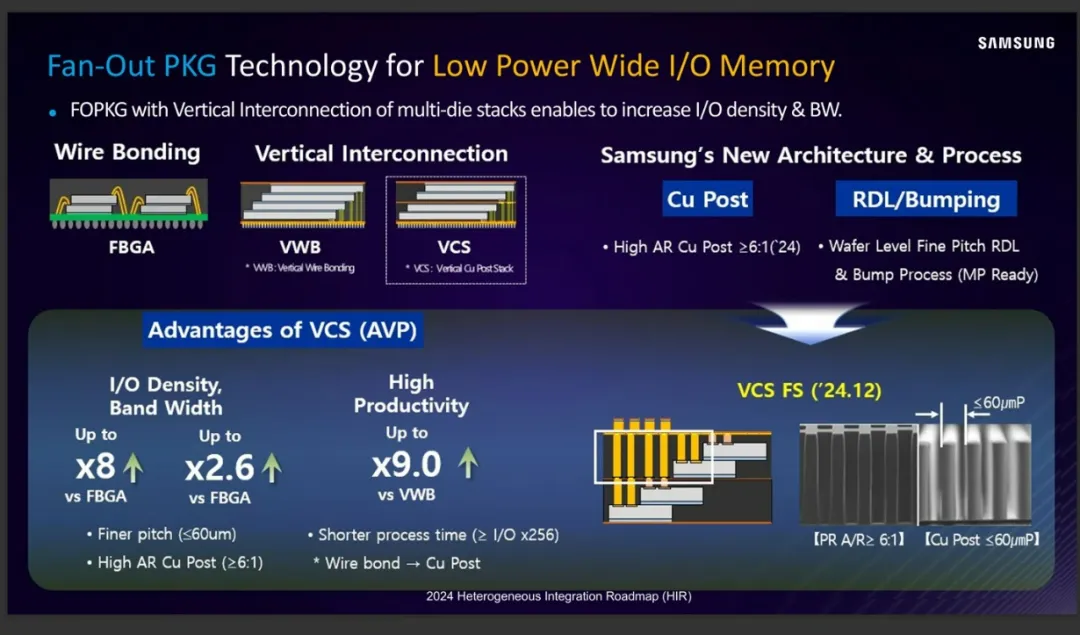

Mobile HBM shares the same stacking concept, but it stacks LPDDR DRAM in a stepped pattern and then connects it to a substrate with vertical wires. Samsung Electronics is developing the technology under the name "VCS", while SK hynix is developing it under the name "VFO". The advantages are high power efficiency, low power consumption, and the ability to provide more I/O data pins.

The biggest difference between mobile HBM and LPDDR is whether it is "custom memory". LPDDR is a general-purpose product that can be used in volume once it is mass-produced, whereas mobile HBM is a customized product that reflects the application and the customer's requirements. Because mobile HBM connects to the processor at different pin positions, it needs to be optimized for each customer's product before mass production.

The industry sees mobile HBM as the next generation of semiconductors and is focusing on its development. Samsung and SK hynix, as the two giants in the field of memory, have spared no effort in the research and development and layout of mobile HBM technology.

Samsung: LPW DRAM to launch in 2028

According to previous reports, Samsung's LPW DRAM, a product with similar technology, offers low latency and bandwidth of up to 128GB/s while consuming only 1.2pJ/b, and was planned to reach commercial mass production in 2025-2026.

It should be noted that mobile HBM chips based on LPDDR are too small to use the same TSV connection scheme as HBM. In addition, the high cost and low yield of the HBM manufacturing process make it unable to meet the demand for high-capacity mobile DRAM.

As a result, Samsung Electronics and SK hynix have adopted another advanced packaging method.

In Samsung Electronics' VCS (Vertical Copper Pillar Stacking) method, DRAM chips cut from wafers are stacked in a stepped pattern and hardened with epoxy material, and holes are then drilled and filled with copper.

According to Samsung Electronics, VCS advanced packaging offers 8 times the I/O density and 2.6 times the bandwidth of traditional wire bonding, and 9 times the production efficiency of vertical wire bonding (VWB). Under the original plan, Samsung's mobile HBM was to launch between the second half of 2025 and 2026.

However, the latest disclosures suggest that this schedule has changed.

At the recent ISSCC 2025, Song Jae-hyuk, CTO of Samsung Electronics' DS Division and head of the Semiconductor Research Laboratory, revealed that Samsung plans to launch mobile devices equipped with LPW DRAM (LP Wide I/O DRAM), or "Mobile HBM", in 2028.

LPW is also known as LLW or "custom memory". As it emerges as the next generation of memory, the industry is using various names for it while standards are still being developed. Regardless of the name, the goal is the same: to increase the number of I/O channels and reduce the speed of each channel, achieving higher performance and lower power consumption at the same time. In addition, the technology uses a Vertical Wire Bonding (VWB) package, which turns the curved signal path of conventional wire bonding into a straight one.
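The "more channels, slower channels" idea can be illustrated with simple arithmetic: for a fixed bandwidth target, the per-pin rate that is needed falls as the interface gets wider. The Python sketch below uses the 200GB/s figure reported for LPW DRAM as the target. The interface widths are hypothetical values chosen for illustration, not Samsung specifications, and the function name is an assumption.

```python
def required_pin_rate_gbps(target_gb_per_s: float, bus_width_bits: int) -> float:
    """Per-pin data rate (Gb/s) needed to reach a target bandwidth (GB/s) on a bus of the given width."""
    return target_gb_per_s * 8 / bus_width_bits


TARGET_GB_PER_S = 200.0  # bandwidth figure reported for LPW DRAM

# Hypothetical interface widths, for illustration only.
for width in (64, 128, 256, 512):
    rate = required_pin_rate_gbps(TARGET_GB_PER_S, width)
    print(f"{width:>4}-bit interface: {rate:6.2f} Gb/s per pin")
```

Each doubling of the width halves the per-pin rate needed for the same bandwidth. Slower signaling generally eases power and signal-integrity constraints, which is the motivation behind wide I/O designs.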

In terms of specific performance, LPW DRAM stacks LPDDR DRAM to greatly increase the number of I/O interfaces, with the dual goals of improving performance and reducing energy consumption. Its bandwidth can exceed 200GB/s, which is 166% higher than the existing LPDDR5X. At the same time, its power consumption is reduced to 1.9pJ/bit, which is 54% lower than LPDDR5X. This will give mobile devices a smoother experience when running large-scale games, video editing, and other high-performance applications, while extending battery life. Samsung reportedly announced at the "Semicon Taiwan" event in September last year that the performance of LPW DRAM was 133% higher than that of LPDDR5X, which means that the performance target has been raised in less than six months.
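The percentages above can be turned into rough absolute numbers. The Python sketch below back-calculates the LPDDR5X baseline implied by the quoted figures and estimates the data-transfer power at full bandwidth. It assumes that "166% higher" means 2.66 times the baseline and that "54% lower" means 0.46 times the baseline. The derived baselines are implied values, not published specifications, and the function names are illustrative.

```python
def baseline_from_increase(value: float, pct_higher: float) -> float:
    """Baseline implied when `value` is `pct_higher` percent above it."""
    return value / (1 + pct_higher / 100)


def baseline_from_reduction(value: float, pct_lower: float) -> float:
    """Baseline implied when `value` is `pct_lower` percent below it."""
    return value / (1 - pct_lower / 100)


def transfer_power_watts(bandwidth_gb_per_s: float, energy_pj_per_bit: float) -> float:
    """Power spent moving data at full bandwidth: bits per second times joules per bit."""
    bits_per_second = bandwidth_gb_per_s * 1e9 * 8
    return bits_per_second * energy_pj_per_bit * 1e-12


lpw_bw, lpw_energy = 200.0, 1.9  # GB/s and pJ/bit, as quoted in the article

base_bw = baseline_from_increase(lpw_bw, 166)          # implied LPDDR5X bandwidth, GB/s
base_energy = baseline_from_reduction(lpw_energy, 54)  # implied LPDDR5X energy, pJ/bit

print(f"Implied LPDDR5X baseline: {base_bw:.1f} GB/s at {base_energy:.2f} pJ/bit")
print(f"LPW transfer power at full bandwidth: {transfer_power_watts(lpw_bw, lpw_energy):.2f} W")  # about 3.04 W
```

This covers only the energy of moving bits at peak rate. Real device power also depends on utilization, refresh, and idle states, so the result is an upper-bound illustration rather than a measured figure.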

This series of breakthroughs is bound to provide more efficient and reliable memory support for the growing number of on-device AI applications.

SK hynix: VFO technology accelerates mobile HBM

Unlike Samsung's VCS, SK hynix opted for copper wires instead of copper pillars, and its process sequence also differs. It connects the stacked DRAMs with copper wires first and then injects epoxy resin into the empty space and hardens it, enabling the stacking of mobile DRAM chips.

This technology is called "VFO (Vertical Line Fan-Out)" and is similar to the method currently used in HBM, in which MUF (molded underfill) material fills the gaps between stacked DRAM dies.

SK hynix pointed out that VFO combines FOWLP (fan-out wafer-level packaging) and DRAM stacking technologies. Through vertical connections, VFO significantly shortens the transmission path of electrical signals between multiple layers of DRAM, cutting the line length to less than 1/4 of that in conventional memory and improving energy efficiency by 4.9%. This method increases heat dissipation by 1.4%, but reduces package thickness by 27%.

On-device AI applications clearly require high-bandwidth, high-speed memory, and the use of HBM in smartphones, tablets, and notebooks is becoming a trend. Some forecasts predict that by 2027, AI mobile phones integrated with HBM will account for more than 50% of the market, with tablets and laptops gradually following.

Once SK hynix and Samsung Electronics make breakthroughs in LPDDR stacking and chip packaging, mobile HBM will undoubtedly be a good choice.

The differences in the technology strategies of the two companies are worth paying attention to, as they could change the landscape of the mobile HBM market. Just as the differences in HBM technology in the data center market determine the dominance of the AI market, mobile HBM is attracting attention as an AI memory for smartphones, PCs, XR headsets, and other devices, and is expected to have a direct impact on the AI market on mobile devices.

A semiconductor industry veteran said, "Samsung focuses on high-bandwidth design (LP Wide I/O) and prioritizes product perfection; SK hynix, on the other hand, is focusing on low power consumption and thinning (VFO), prioritizing cost-effectiveness, and it is too early to tell who has more market value, as each customer has different requirements."

The competition between Samsung and SK hynix in mobile HBM has shifted from technology research and development to a comprehensive contest over mass production capabilities and customer ecosystems. Samsung has narrowed the gap through process innovation and capacity expansion, while SK hynix has maintained its lead with its yield advantage and customization strategy. With the mass production of HBM4 after 2025, the two manufacturers will accelerate the migration of the technology down to mobile devices, driving a performance revolution in smartphones, PCs, and AR/VR devices.

It has also been reported that mobile HBM is likely to be produced in customized form and supplied to smartphone chip manufacturers, which would shift the industry from a supplier-centric model to a demand-centric one. SK hynix has already done something similar by supplying customized low-power DRAM for Apple's "Vision Pro" headset. However, because mobile HBM is still in the R&D phase, it is not yet clear how customization will differ from company to company. The same pattern is visible in automotive HBM: SK hynix's HBM2E, produced specifically for automobiles, is customized for the needs of that field.
